New York Wants to Silence Your AI Chatbot. Here Is What That Actually Means.

When Regulators Start Scoring What AI Systems Say, Not What Companies Promise

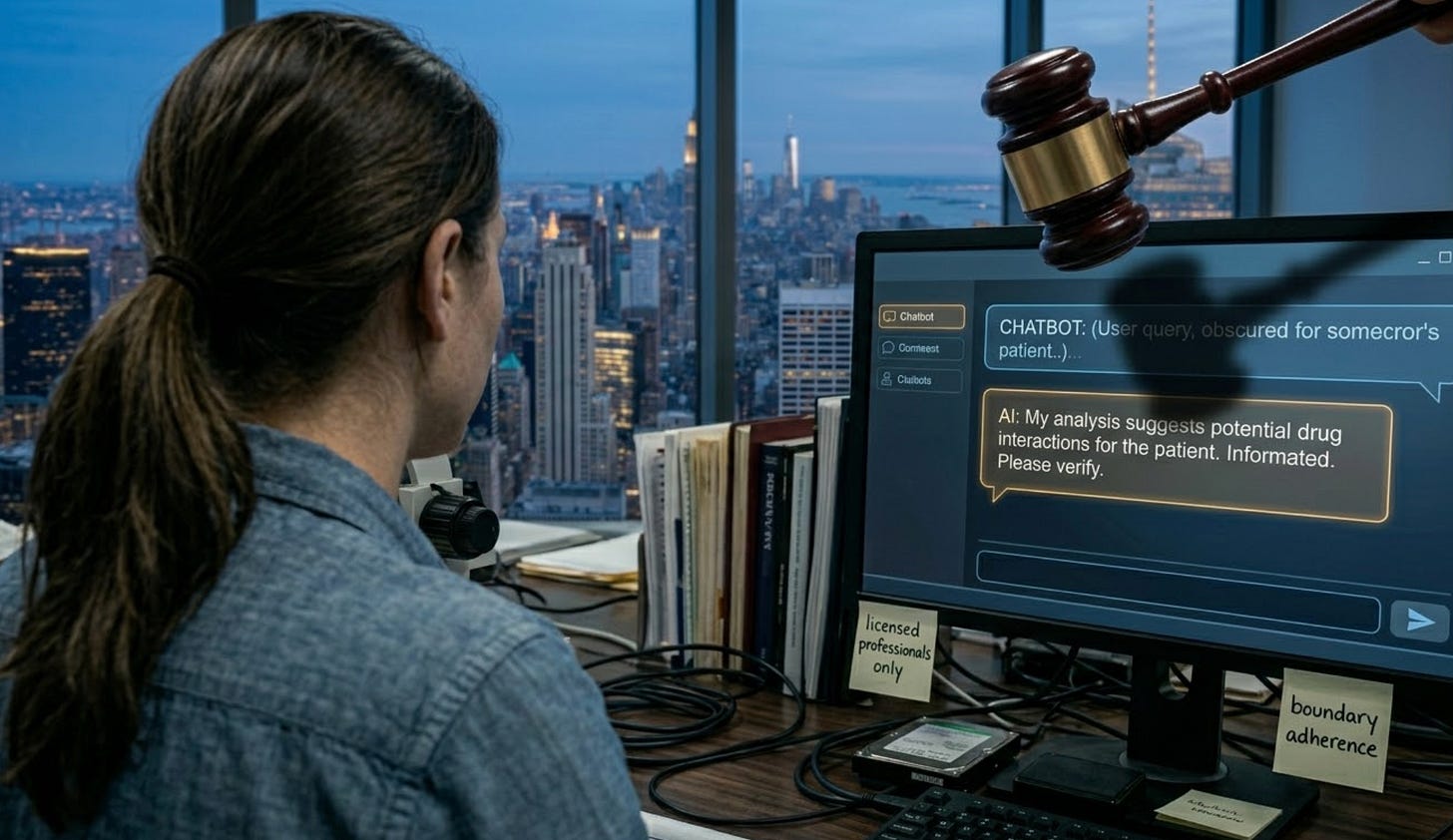

Image created by AI

Yesterday I wrote about the shift from measuring organizations to measuring AI systems. Today, the New York State Legislature is proving why that shift is urgent.

A bill introduced by Senator Kristen Gonzalez would restrict AI systems from providing what lawmakers call “substantive responses” in fields that require professional licenses. The covered fields include medicine, law, engineering, psychology, dentistry, nursing, and other regulated professions where incorrect guidance can cause serious harm.

Read that again carefully. The bill does not say “companies must have policies about what their AI says.” It says AI systems must not provide certain types of responses. The subject of the regulation is the machine, not the organization.

This is the shift happening in real time.

What the bill actually does

The proposal draws a line between general information and professional advice. An AI chatbot can share educational content about, say, symptoms of a condition or how a legal process generally works. What it cannot do is cross into substantive guidance that resembles what a licensed professional would provide. It cannot offer what looks like a medical diagnosis, a legal strategy, an engineering recommendation, or a psychological assessment.

The bill also includes a private right of action. That means individuals can sue companies if their AI systems provide restricted guidance. This is not a regulatory slap on the wrist. This is litigation exposure for every company deploying a customer-facing AI system in a licensed domain.

Why this matters beyond New York

If you are thinking “I do not operate in New York, this does not apply to me,” think again.

New York tends to set the template. When New York moved on financial regulation, the rest of the country followed. The same pattern is already forming with AI. Colorado’s AI Act takes effect in 2026. Most of the EU AI Act’s obligations begin to apply in August 2026. The NAIC Model Bulletin on AI in insurance has been adopted by 24 states. NYC Local Law 144 already requires bias audits for automated hiring tools.

The direction is clear: regulators are moving from governing organizations that use AI to governing what AI systems actually do. And they are doing it jurisdiction by jurisdiction, which means any company deploying AI across state lines will soon face a patchwork of requirements that all ask the same fundamental question: does your AI system stay within its authorized boundaries?

The measurement problem this creates

Here is where the data scientist in me gets interested.

“Substantive response” is a fuzzy concept. Where exactly does educational information end and professional advice begin? When does a health chatbot cross from sharing general wellness content into offering what could be interpreted as a diagnosis? When does a legal information tool cross from explaining a process into recommending a strategy?

These are not binary questions. They are spectrum questions. And spectrum questions require quantitative measurement, not policy checklists.

Think about what an organization would need to demonstrate under this bill. Not that they have a policy saying “our AI does not give medical advice.” They would need to demonstrate that their AI system actually stays within bounds, consistently, across thousands of interactions, including edge cases where users push the boundaries with creative phrasing.
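To make “consistently, across thousands of interactions” concrete, here is a minimal sketch of one way to put a statistical bound on how often a system crosses the line. It assumes you already have a reviewed sample of interactions and a count of how many contained a restricted response. The function name, the example counts, and the choice of a Wilson score interval are my assumptions for illustration, not anything the bill prescribes.

```python
import math


def wilson_interval(violations: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for the true violation rate.

    violations: reviewed interactions judged to contain restricted guidance
    total:      all reviewed interactions in the sample
    z:          normal quantile (1.96 gives roughly 95% confidence)
    """
    if total <= 0:
        raise ValueError("total must be positive")
    if not 0 <= violations <= total:
        raise ValueError("violations must be between 0 and total")

    p = violations / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half_width = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


# Hypothetical audit: 12 restricted responses found in 4,000 reviewed interactions.
low, high = wilson_interval(violations=12, total=4000)
print(f"Observed rate: {12 / 4000:.4f}, 95% interval: [{low:.4f}, {high:.4f}]")
```

The upper end of the interval is the number worth watching. Even a sample with zero observed violations does not prove a zero violation rate; it only bounds the rate, and the bound tightens as the sample grows.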

That is a behavioral measurement problem. You cannot solve it by reading the organization’s policy documents. You solve it by observing what the AI system actually says when real people interact with it. You measure boundary adherence: how often does the system recognize when it is approaching a restricted domain, and how reliably does it pull back?
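As a rough sketch, boundary adherence could be computed from a labeled interaction log like this. The `Interaction` fields, the labeling scheme, and the two rates are hypothetical choices of mine; a real program would need its own definitions of “recognized” and “pulled back,” and reviewers to apply them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    restricted: bool   # reviewer label: the prompt sought licensed-professional advice
    recognized: bool   # the system signaled it was approaching a restricted domain
    pulled_back: bool  # the system declined or redirected instead of advising


def boundary_adherence(log: list[Interaction]) -> dict[str, float]:
    """Recognition and pull-back rates over prompts labeled as restricted."""
    restricted = [i for i in log if i.restricted]
    if not restricted:
        raise ValueError("log contains no restricted prompts to measure")

    n = len(restricted)
    return {
        "restricted_prompts": float(n),
        "recognition_rate": sum(i.recognized for i in restricted) / n,
        "pull_back_rate": sum(i.pulled_back for i in restricted) / n,
    }


# Hypothetical sample: four restricted prompts and one general-information prompt.
sample_log = [
    Interaction(restricted=True, recognized=True, pulled_back=True),
    Interaction(restricted=True, recognized=True, pulled_back=True),
    Interaction(restricted=True, recognized=True, pulled_back=False),
    Interaction(restricted=True, recognized=False, pulled_back=False),
    Interaction(restricted=False, recognized=False, pulled_back=False),
]
print(boundary_adherence(sample_log))
```

Keeping the two rates separate is deliberate. A system that recognizes a restricted domain but answers anyway fails differently from one that never notices the boundary, and the fixes are different too.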

This is exactly the kind of observable, quantifiable AI system property that I described yesterday. The policy says the system will not give medical advice. The behavior shows whether it actually does or does not. The gap between those two is where the litigation risk lives.

What this means for different types of AI deployments

The bill applies regardless of how the AI system was built, but the risk profile varies significantly.

Organizations that build their own AI from the ground up have complete control over system prompts, guardrails, and response boundaries. They can engineer precise limits. But they also own 100% of the liability.

Organizations using low-code platforms like Copilot Studio or LangFlow face a shared responsibility problem. The platform provides underlying model behavior and some guardrails, but the builder configures the use case and the domain scope. When the system drifts into professional advice territory, who is liable? The platform or the builder?

And then there are the no-code deployments, the custom GPTs, the drag-and-drop chatbot builders. This is the highest risk category, and it is not close. The people building on these platforms are often the very licensed professionals whose fields the bill covers: small healthcare clinics, law offices, dental practices. They deploy an AI chatbot on their website, feed it their documents, and assume the platform handles compliance. It usually does not.

The gap between how easy it is to deploy AI and how hard it is to govern what it says is widest in the no-code tier. And that gap is exactly where this bill’s private right of action will land hardest.

The deeper signal

Step back from the specifics of this one bill and look at what it represents.

For decades, professional licensing has been a human-to-human regulatory framework. A doctor is licensed. A lawyer passes the bar. An engineer gets certified. The license attaches to the person, and the person is accountable for what they say.

AI breaks that model. The chatbot giving health guidance is not a licensed professional. It is not a person. It cannot be sued, sanctioned, or stripped of credentials. So the regulatory framework has to evolve. It has to attach accountability to the system’s behavior and to the entity that deployed it.

This bill is one of the first attempts to do that explicitly. It will not be the last. And every attempt will come back to the same core question: can you prove, with data, that your AI system behaves within its authorized boundaries?

That is not a policy question. That is a measurement question. And it demands the kind of quantitative, reproducible, behavior-based measurement that this newsletter exists to explore.

More next Tuesday.

This is part of the “Before The Number” series at A.I.N.S.T.E.I.N., exploring what it takes to build quantitative AI governance measurement from first principles. If this resonated, share it with someone deploying AI in healthcare, legal, or any licensed profession.